Uptick in Anti-AI Terrorism

Narrative gets you to the IPO. Narrative makes everyone hate you.


Now that I am again writing this newsletter on a regular cadence, I’ve asked myself, “What should the current iteration of Young Money look like?” The equilibrium I’ve reached is treating Monday mornings as the time to publish my thoughts on the “current thing(s)” in tech / markets / whatever is dominating my Twitter feed, while publishing irregular, less timely blog posts as they take root in my brain. On to today’s piece.

Yesterday, someone pulled up to Sam Altman’s house in San Francisco and fired a gun at the property. Three days ago, someone threw a Molotov cocktail at the same house.

It goes without saying that these are horrible, disgusting attacks against Altman and his family, and the freaks that perpetrated them should be punished to the fullest extent of the law.

These were also not, in my opinion, isolated and/or “random incidents.”

The man who threw a Molotov cocktail at Altman’s home is 20-year-old Daniel Alejandro Moreno-Gama. Moreno-Gama was an active member of “PauseAI,” an organization whose name is self-explanatory. In the “PauseAI” Discord chat, he had sent messages including “Is there any sort of timeline for how long humanity has left?” As an 18-year-old college student, he emailed Congressman Dan Crenshaw about the group’s concerns that AI labs were not taking safety and alignment seriously. Last week, he threw a Molotov cocktail at the home of OpenAI’s CEO.

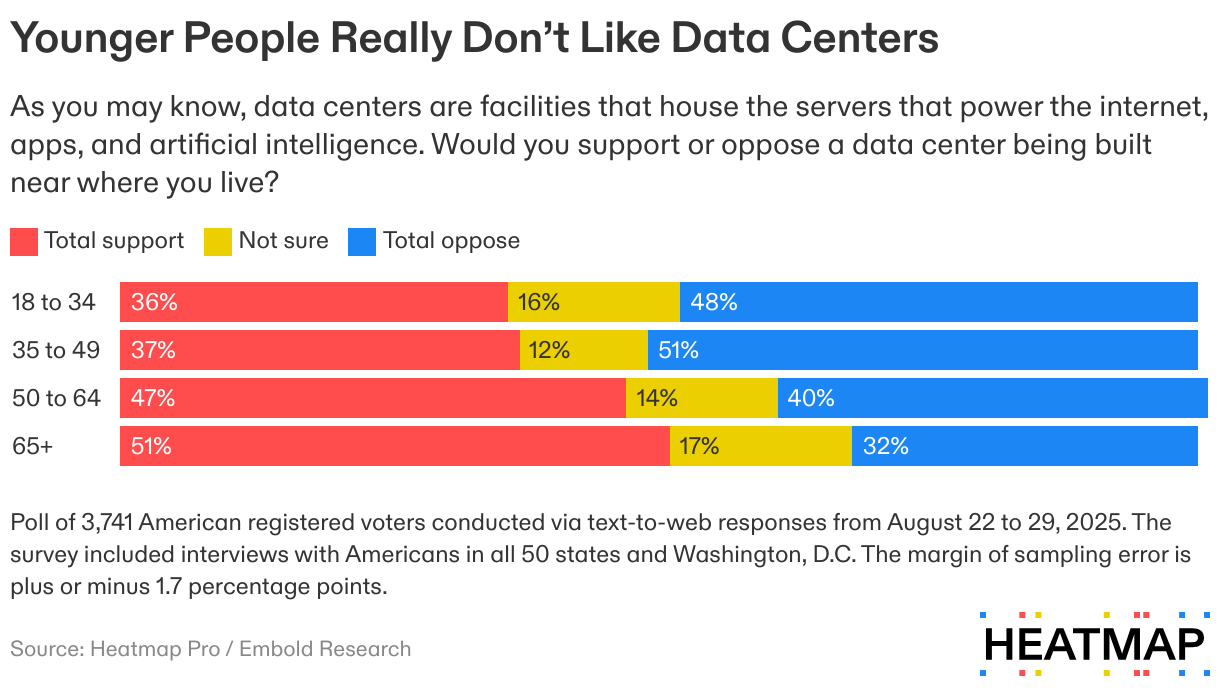

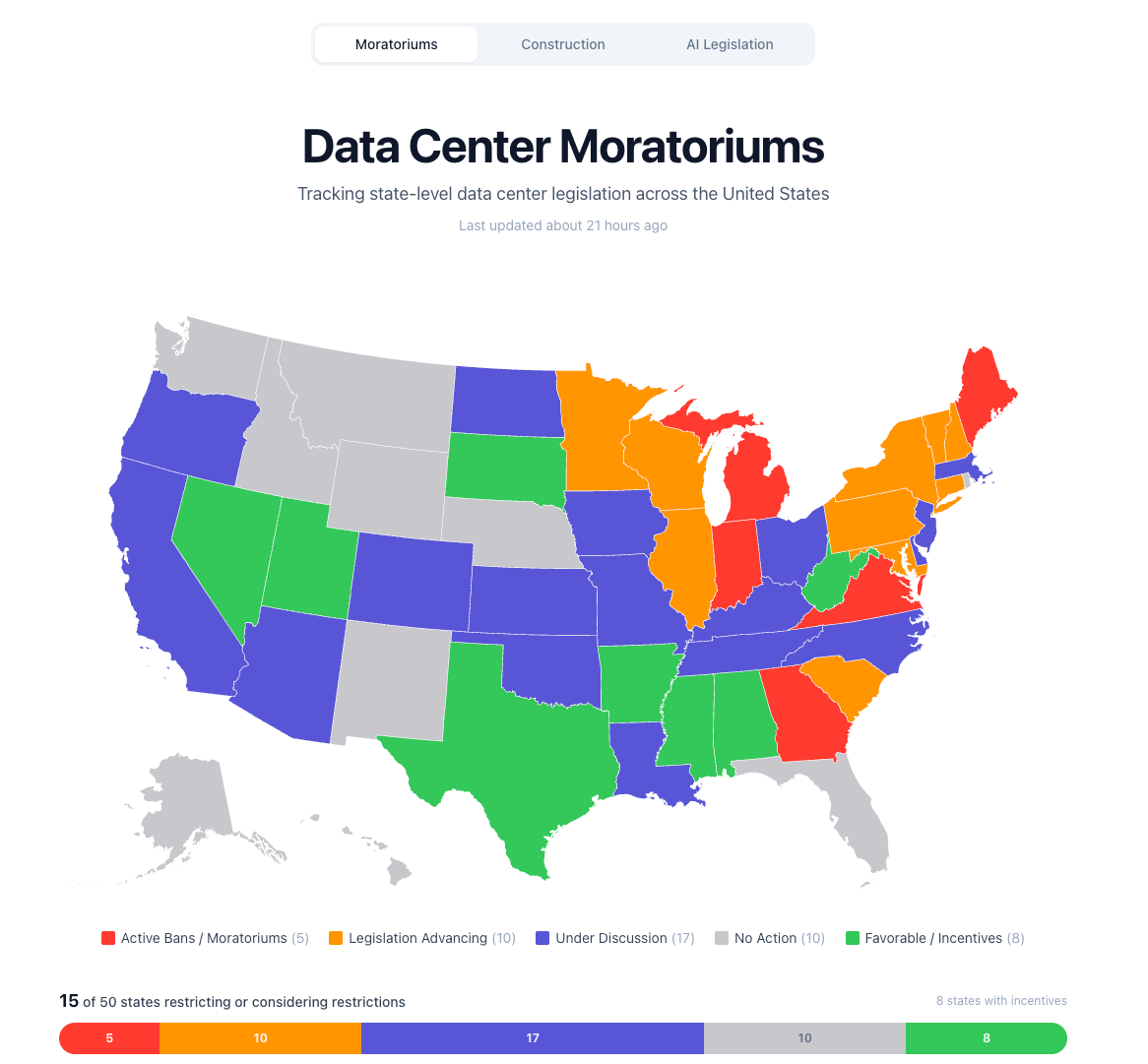

Meanwhile, Americans in multiple states are actively protesting, and shutting down, data center construction projects. In January, NPR reported that protesters in Virginia, Pennsylvania, and North Carolina have shut down proposals for new data center buildouts. A town in Wisconsin tried to can its mayor for approving a new data center. Maine banned new data centers until November 2027, after seeing its total electricity bills spike 60% between 2021 and 2026 (the state also, notably, banned nuclear reactor construction, and reactors may have helped with power costs, but I digress). Virginia, the top state for data center construction, booted its GOP governor and elected a Democrat who promised to “make data centers pay their own way for power.” It turns out that everyone, especially young people, dislikes data centers, and that disdain carries across both sides of the political aisle, per Heatmap News.

Democrats hate data centers because of their environmental impact. Republicans hate them because they represent big tech forcing higher power bills upon Americans. Zoomers hate them because... vibes?

> In Oklahoma, 21-year-old GOP organizer Kennedy Laplante Garza started fighting a nearby data center proposal known as Clydesdale after learning over the summer that it would be built a mile from her family’s farm. “I didn’t even know that much about data centers at that point,” she told me. “But I knew my friends across the state were fighting similar things, whether they were solar panels or wind turbines.”

Will Manidis made a site tracking data center moratoriums in the US by state. Guess what? Five states have active bans, ten have legislation advancing, and seventeen have bans under discussion.

The majority of Americans “hate” AI. Of course, that shouldn’t be a surprise when the CEOs of three of the biggest AI labs in America are all basically saying the entire white collar labor force is just a few years away from getting brutally job-mogged by LLMs:

May 2024: Elon Musk says AI will take all of our jobs.

May 2025: Dario Amodei tells Axios that AI could wipe out half of all entry-level white collar jobs and spike unemployment to 10-20% in the next 1-5 years.

October 2025: Sam Altman says AI could eliminate jobs that ‘aren’t real work,’ implying that many of today’s white collar jobs are, to put it bluntly, “fake.”

January 2026: Dario Amodei predicts that humans will be unable to adapt to AI development’s rapid pace, and that this will trigger an “unusually painful” short-term shock in the labor market.

January 2026: Elon Musk says that in 10-20 years, “work will be optional.”

March 2026: Sam Altman admits that AI is killing the “labor-capital balance.”

It turns out when you tell people, “Hey, we’re building mega technology, it’s going to take your job, and, by the way, you’re going to help pay for it with a higher power bill,” people get pissed off and/or anxious. Some of those people will act on that anxiety and/or anger by protesting data centers, but, in a population exceeding a minimum size, there will be outlier, left-tail individuals who decide to take matters into their own hands, threatening to destroy infrastructure, or, in the case of Altman, threatening the leaders of AI themselves.

There is a very, very good blog post by Tim Ferriss called 11 Reasons Not to Become Famous that is, I think, relevant when thinking through these attacks.

> Think back to your 5th-grade class. In my case, there were 20–30 kids. Was there anyone totally off the rails in your class? For most of you, there’s a decent chance kids seemed pretty sane. It’s a small sample size.
>
> Next, think back to your freshman year in high school. In my case, there were a few hundred kids. Was there anyone volatile or unbalanced? I can think of at least a handful who were prone to violence and made me uneasy. There were fights. Some kids brought knives to school. There was even a kid rumored to enjoy torturing animals. Keep in mind: this high school was in the same town as my elementary school. What changed? The sample size was larger.
>
> Flash forward to my life in July of 2007, less than three months after the publication of my first book.
>
> In that short span of time, my monthly blog audience had exploded from a small group of friends (20–30?) to the current size of Providence, Rhode Island (180,000–200,000 people). Well, let’s dig into that. What do we know of Providence? Here’s one snippet from Wikipedia, and bolding is mine:
>
> > Compared to the national average, Providence has an average rate of violent crime and a higher rate of property crime per 100,000 inhabitants. In 2010, there were 15 murders, down from 24 in 2009. In 2010, Providence fared better regarding violent crime than most of its peer cities. Springfield, Massachusetts, has approximately 20,000 fewer residents than Providence but reported 15 murders in 2009, the same number of homicides as Providence but a slightly higher rate per capita.
>
> The point is this: you don’t need to do anything wrong to get death threats, rape threats, etc. You just need a big enough audience. Think of yourself as the leader of a tribe or the mayor of a city.

Tim goes on to explain the various death threats, stalker incidents, and psychotic messages he’s had to deal with since his first book blew up. And Tim Ferriss isn’t particularly controversial or mega-famous; he’s a moderately well-known podcaster known for his life optimization and bio-hacking content.

The leaders of the AI labs have millions of Twitter followers, and, in 2026, information spreads across the internet like wildfire, meaning the total “audience” for any message involving any major figure in AI is likely in the billions, or, at minimum, hundreds of millions. And that message, right now, is that AI is about to blow up your way of life and displace most of the white collar labor force.

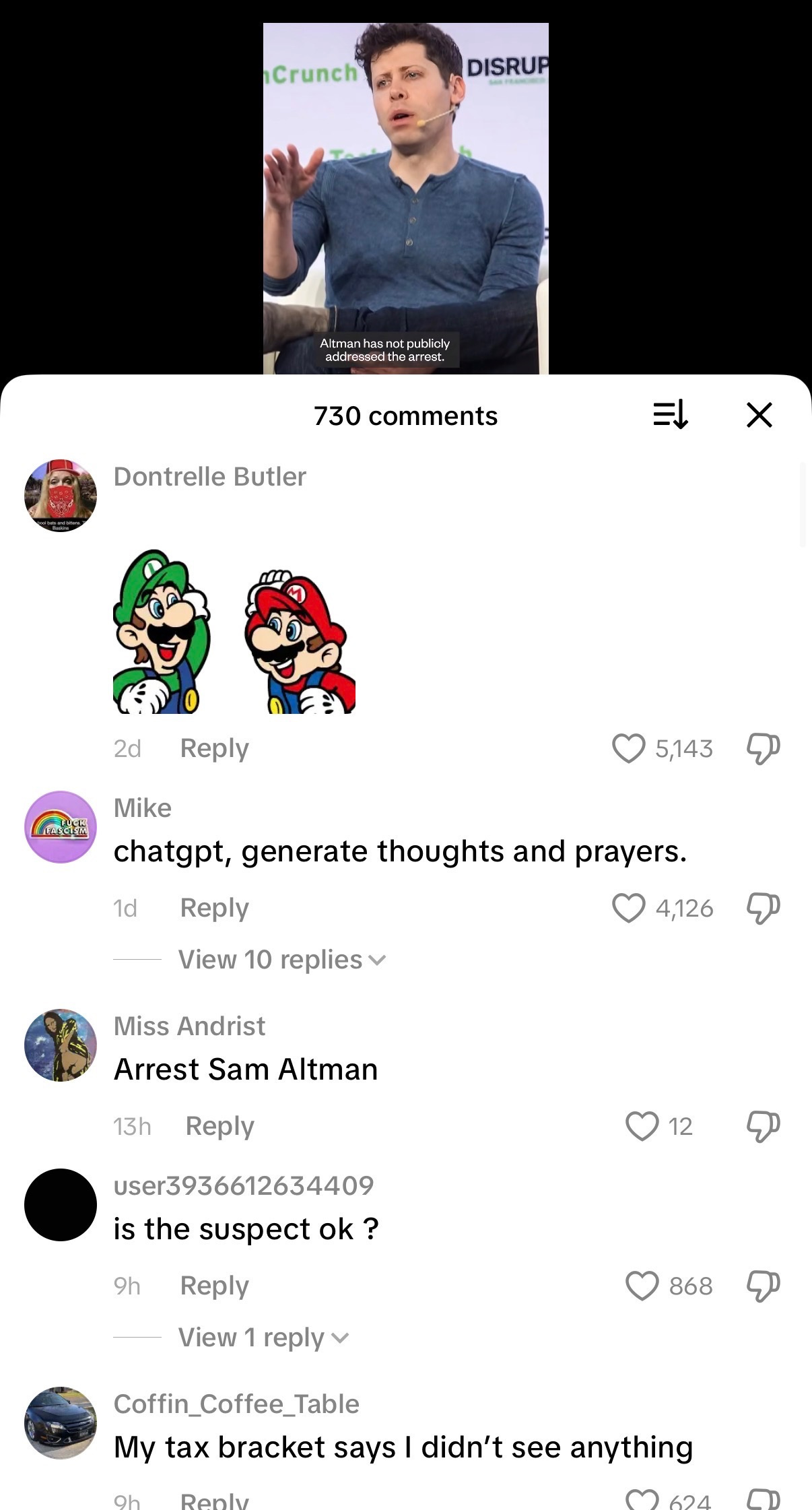

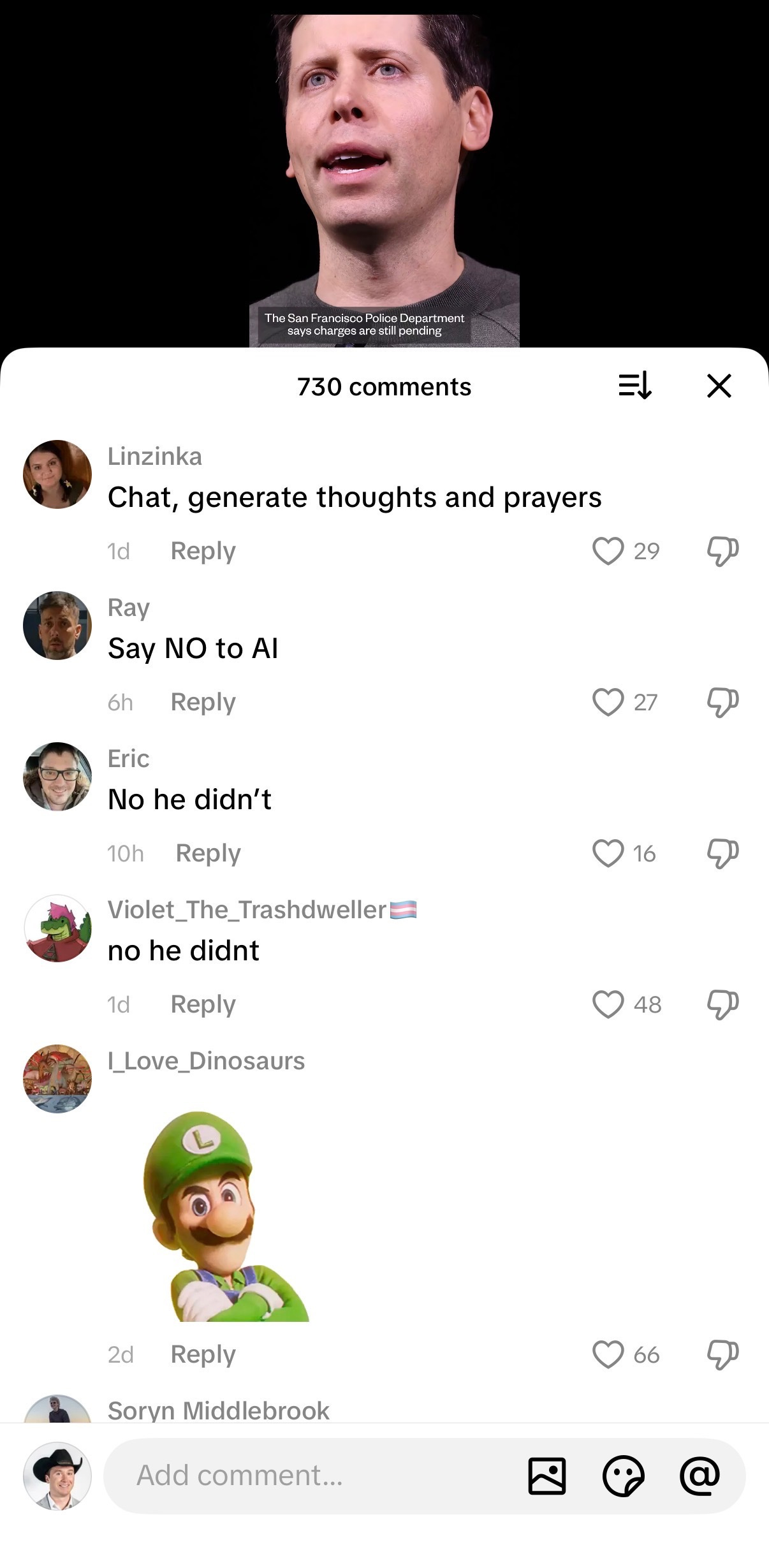

Say that “audience” in America alone is 100 million people. Even 1% of 1% of that audience is 10,000 potentially unhinged actors off their meds going through some version of psychosis. And you just never know when one of these psychos is going to act on their impulses. Particularly if that psycho is a frustrated young male. Who threatened to blow up an xAI data center last spring? A 25-year-old male. Who threw the Molotov cocktail at Altman’s home? A 20-year-old male. Remember Luigi Mangione? Yeah. Young male.

Young males are, compared to other demographics, prone to violence, disorder, and destruction if their life is otherwise in disarray. And people really, really hate AI. Look at the TikTok comments on posts about Altman’s home being attacked. It’s a mixture of satire, crude irony, support for the attacker, and allusions to Luigi Mangione.

Take nationwide AI psychosis, disgruntled young males, online communities where AI resentment festers behind closed doors, fundamental misunderstandings of what this technology can actually do, and narratives that spread like wildfire, far outpacing whatever the “truth” might be, and you have a pressure cooker for a few insane folks to take a vigilante approach to defending their ideals, no matter how false those ideals might be.

I mean, come on, Luigi Mangione was a mentally unwell 20-something dude who committed a horrific act in killing a married father of two, and he’s still a cult hero amongst internet incels who think Mangione was standing up for something. But this is, in fact, the world we’re living in today. Guess what? Narratives, even if they’re false, can be powerful, and, through that power, dangerous if they frighten the wrong soon-to-be bad actor. “The American health insurance industry is preying on you.” Is that true? Who knows. Someone thought it was, and they murdered a healthcare executive over it in Midtown Manhattan.

Is AI going to “kill white collar jobs”? No. AI, and, more specifically, LLMs, can do a lot of things well. They’re great at generating code! They can also read through documents and write prose in, basically, no time, and they can scale, theoretically, to infinity. That is very useful. But AI does not have activation energy, agency, desire, or willpower. It’s not nuanced, though it can mimic nuance. It doesn’t “know” or “remember” things like humans do, though increasingly large context windows allow it to “recall” increasingly impressive amounts of information. But there’s so much subtle friction in, well, most jobs, that I’m just bearish on the idea that AI will, at some point, “do everything.”

But the narrative being pushed by every player in AI is that AI will, in fact, eat the entire world. And there’s nothing anyone can do about it. Is that narrative true? I mean, I’m personally bearish on any concept of “ASI” or “AGI,” but you need a narrative when you’re trying to go public at what will likely be a $1 trillion+ valuation.

The bigger the valuation, the bigger the narrative needed, particularly when you’re still years from profitability, access to compute is increasingly “the only thing that matters,” and the difference between frontier models is marginal at best. And the only narrative that can support what will likely be multiple loss-making trillion-dollar companies in the public markets is that we’re $500 billion in compute spend away from ending “work” as we know it.

I do think Anthropic’s founders actually believe in an AGI and ASI future. I do not know if OpenAI’s executives believe it, or if they are simply aware that they have to sell this vision to keep raising capital. It doesn’t really matter. At the end of the day, all of the talking points basically boil down to, “We’re raising to terminate all of your jobs. Tbd on what comes after that.”

Silicon Valley’s ethos is “accelerate or die.” Most of America just wants to earn a living wage and watch their kid’s baseball game on the weekends. Folks don’t want to be “liberated from white collar work.” They just want to put in their hours, get their check, and do whatever they want in their free time.

The AI narrative of “We’ve built the thing that’s going to eradicate your jobs” is antithetical to the long-standing unspoken agreement between capital and labor in America: “Do your work, and you’ll earn enough money to live a good life.”

Now it’s, “We are replacing your work. Maybe you’ll get UBI or something. Have fun in the permanent underclass.” Again, I am “short” the idea that AI is actually going to take all of our jobs. But if you tell the same story over and over again, do you really think the very people whose jobs and power bills are threatened are just going to sit by and think, “Ah well, I guess I should ride out the next five years, then good luck”?

No. People, unlike LLMs, have agency.

When threatened, or at least when they perceive themselves to be threatened, the far-left-tail actors will, in fact, act. And each actor emboldens more actors. A protest in one town shuts down a data center? More protesters will appear in other towns. The momentum accelerates. Someone throws a Molotov cocktail at Altman’s home? Someone else comes by two days later with a gun. Every vigilante spawns a new vigilante, and the vigilantes think they’re the good guys stopping the “evil overlords” of AI.

I’ve seen a lot of takes on Twitter blaming journalists for stoking the flames of resentment against Altman and the AI industry in general. And, yes, sure, there have been plenty of pieces painting these folks in a bad light, and, yes, sure, some of these pieces were likely written in bad faith. But when your talk track for two years has been “AI’s going to eradicate the income stream that supports your family,” I don’t think it’s that crazy that some folks might take that threat seriously, and act on their desperation in desperate ways.

A lot of investors are worried about training costs, long-term margins of increasingly commoditized frontier models, and access to GPUs in 2029. I’m worried that some asshole from rural Arkansas is going to spend too much time on Reddit and then go blow up a data center in Memphis to “save the world.”

Anyway, happy Monday. Let’s churn through some tokens.

Content Recs:

For my SF folks: I’m co-hosting a tech / VC / startup breakfast with my buddy Morgan Barrett on Tuesday AM. If you’re around, come hang!

Former US Senator Ben Sasse, a great Twitter follow and that rarity, a good person in Congress, is dying of stage four cancer. But before he dies, he’s hitting the podcast circuit hard. This conversation with Ross Douthat at the New York Times is a really, really good read / listen.

Hilarious project someone made called “NYC lines” that is, literally, monitoring “lines” at popular NYC restaurants. Shout out vibe coding. Here’s the website link: damnlines.com

Really fun piece in the New York Times by John Carreyrou on finding the creator of Bitcoin.

- Jack

